Compliance-First AI Development: A Checklist for Regulated Teams

If your code touches HIPAA, PCI, GDPR, or SOC 2 data, AI coding tools become a compliance question. A defensible one-page checklist.

If your code touches HIPAA-protected health data, PCI cardholder data, GDPR personal data, or SOC 2-scoped systems, AI coding assistants become a compliance question — not just a productivity one.

You can't go to your auditor with "we use AI to write code, we hope it's fine." You need a defensible answer to the questions they will ask.

This is the practical, one-page checklist.

What auditors actually ask about AI tooling

In a real audit, the relevant questions cluster into five areas:

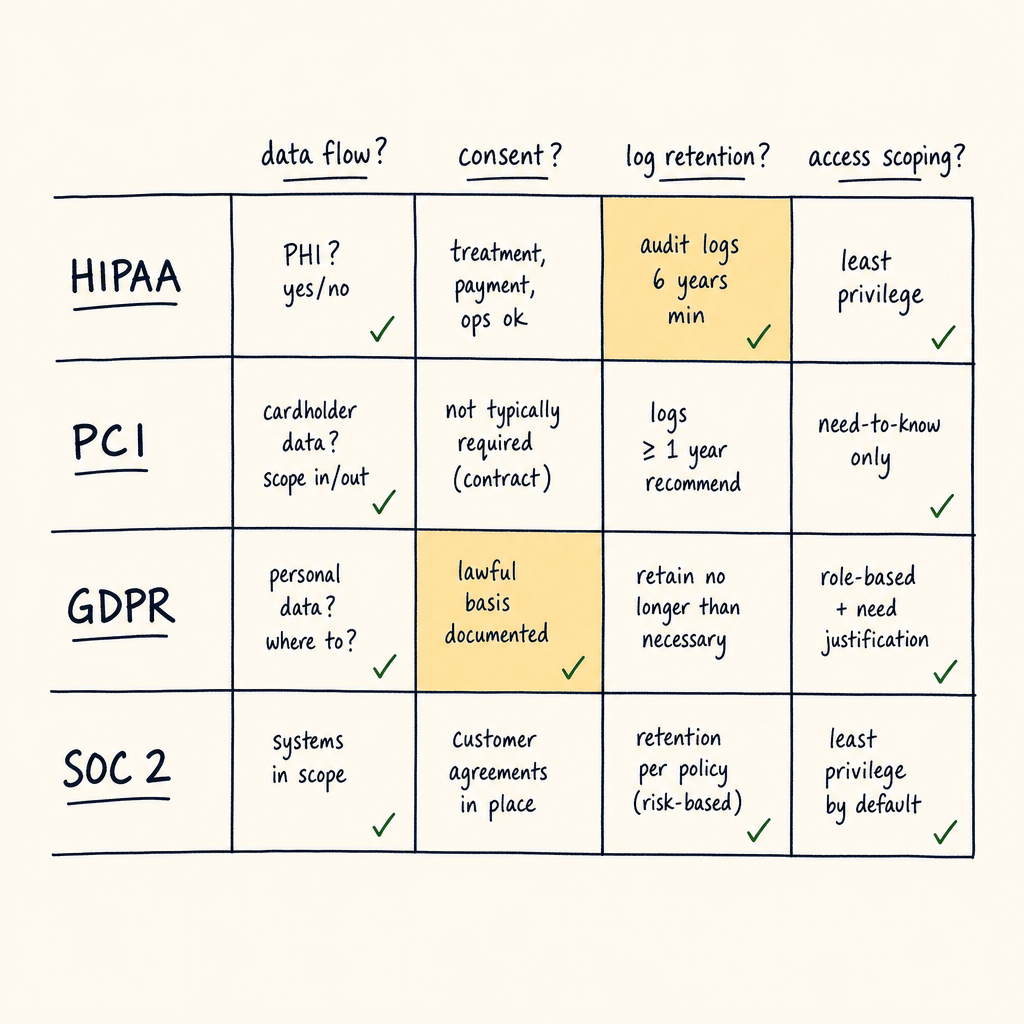

Four regulations, four canonical question shapes. Most teams fail on the data-flow question, not the policy one.

- Data flow — Does any regulated data leave the company perimeter when developers use AI?

- Code review — How do you ensure AI-generated code meets the same security/compliance bar as human-written code?

- Audit trail — Can you reconstruct what AI tool was used, by whom, on what code, when?

- Training data — Is the AI vendor using your inputs to train models?

- Incident handling — If an AI-generated change causes a security or compliance incident, can you isolate the cause?

A good checklist answers each of these directly.

The one-page checklist

Data flow

- Production data is never shared with AI tools as part of normal development. Use synthetic data, anonymised data, or local-only fixtures instead.

- The list of approved AI tools is documented in a CLAUDE.md (or equivalent) at the repo root.

- All approved AI tools have a signed BAA (HIPAA), DPA (GDPR), or equivalent data-processing agreement. The signed document lives in your compliance vault.

- AI tools requiring outbound calls to third-party APIs are gated through an outbound proxy that logs requests and rejects regulated-data egress patterns (regex on PII fields, etc.).
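To make the proxy rule concrete, here is a minimal sketch of the check an outbound proxy hook might run on each request body before it reaches an AI vendor. The function names and the three patterns (US SSN, email address, card number confirmed by a Luhn checksum) are illustrative assumptions, not a complete PII detector. A regex filter is a backstop that will miss things, which is why the production-data rule still matters.

```python
import re
from dataclasses import dataclass, field

# Illustrative patterns only (assumptions): tune them to your own regulated
# fields, and expect regexes to miss some cases and over-match others.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(candidate: str) -> bool:
    """Return True if the digits in candidate pass the Luhn checksum."""
    digits = [int(c) for c in candidate if c.isdigit()]
    total = 0
    # Double every second digit, counting from the rightmost one.
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


@dataclass
class EgressDecision:
    allowed: bool
    reasons: list[str] = field(default_factory=list)


def inspect_outbound(body: str) -> EgressDecision:
    """Decide whether an outbound request body may leave the perimeter."""
    reasons = []
    if SSN_RE.search(body):
        reasons.append("us_ssn")
    if EMAIL_RE.search(body):
        reasons.append("email")
    # The Luhn check cuts false positives from long IDs and timestamps.
    if any(luhn_valid(m.group()) for m in CARD_RE.finditer(body)):
        reasons.append("card_number")
    return EgressDecision(allowed=not reasons, reasons=reasons)
```

A hook that gets allowed=False back would reject the request and log the reasons, which also feeds the audit trail.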

Code review

- Every AI-generated commit is reviewed by a human before merge to a regulated branch (main, release branches).

- The code review checklist explicitly asks: "Did AI generate this? If so, which tool, and what was the prompt?"

- Static-analysis (SAST) tools run on every PR regardless of whether the code is human or AI-authored.

- Dependency-update PRs created by AI agents have the same review burden as human-authored ones — no auto-merge for regulated repos.
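These review rules can be enforced as a merge gate rather than left to reviewer memory. A minimal sketch, assuming PR metadata is available as a simple record: the PullRequest fields, the branch names, and the blocker messages are hypothetical. In practice this logic would sit behind your code host's branch protection or a required CI status.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed branch naming: "main" plus "release/*" branches are regulated.
REGULATED_BRANCHES = {"main"}
REGULATED_PREFIXES = ("release/",)


@dataclass
class PullRequest:
    target_branch: str
    ai_assisted: bool         # answer to the review-template question
    ai_tool: Optional[str]    # which tool, if AI-assisted
    human_approvals: int      # approvals from human reviewers only
    sast_passed: bool
    auto_merge: bool = False


def is_regulated_branch(branch: str) -> bool:
    return branch in REGULATED_BRANCHES or branch.startswith(REGULATED_PREFIXES)


def merge_blockers(pr: PullRequest) -> list[str]:
    """Return the reasons this PR may not merge (empty list means OK)."""
    blockers = []
    # SAST applies to every PR, human- or AI-authored, on any branch.
    if not pr.sast_passed:
        blockers.append("SAST must pass on every PR")
    if is_regulated_branch(pr.target_branch):
        if pr.human_approvals < 1:
            blockers.append("needs at least one human approval")
        if pr.ai_assisted and not pr.ai_tool:
            blockers.append("AI-assisted PR must name the tool")
        if pr.auto_merge:
            blockers.append("auto-merge is disabled on regulated branches")
    return blockers
```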

Audit trail

- AI tool usage is recorded per-developer per-day at minimum. (Tool name, model used, time window — not necessarily individual prompts.)

- Generated code is committed with conventional commit prefixes that signal AI involvement (e.g. feat(ai-assisted): ...) so audit queries can filter on them.

- Prompts that produced security-relevant code (auth, encryption, access control) are saved alongside the PR.

- You can answer "which PRs in the last 90 days were AI-assisted?" from a single query.
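If the commit-prefix convention is followed, the 90-day question reduces to filtering git history. A minimal sketch, assuming the log is produced with git log --since="90 days ago" --format="%H%x09%cI%x09%s" (full hash, strict ISO 8601 committer date, subject, tab-separated) and that the ai-assisted scope is used as described above. The function names are hypothetical, and mapping commits to PRs depends on your merge strategy (with squash merges, the commit subject is typically the PR title).

```python
import re
from datetime import datetime, timedelta, timezone
from typing import Optional

# Matches subjects like "feat(ai-assisted): ..." or "fix(ai-assisted)!: ...".
AI_SCOPE_RE = re.compile(r"^\w+\(ai-assisted\)!?: ")


def parse_git_log(text: str) -> list[tuple[str, datetime, str]]:
    """Parse output of: git log --format=%H%x09%cI%x09%s"""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue
        sha, iso_date, subject = line.split("\t", 2)
        rows.append((sha, datetime.fromisoformat(iso_date), subject))
    return rows


def ai_assisted_commits(rows, now: Optional[datetime] = None, days: int = 90) -> list[str]:
    """Return SHAs of AI-assisted commits within the last `days` days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    return [sha for sha, when, subject in rows
            if when >= cutoff and AI_SCOPE_RE.match(subject)]
```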

Training data

- Each approved tool has a documented stance on training data. The tool either:

  - Does not train on customer inputs (most enterprise plans), OR

  - Has been explicitly opted out, with proof in your compliance vault.

- Free-tier or personal-account use of AI tools on company code is forbidden by policy.

- When a vendor changes its data-handling policy, you have a notification mechanism (a subscription to the vendor's security RSS feed, notices routed to a security@ alias, etc.).
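The training-data stance can live in a machine-readable tool registry that is checked on every change. A minimal sketch, where the ToolRecord fields, plan names, and vault path are hypothetical: a tool with an undocumented stance, a personal or free plan, or default training without opt-out evidence produces a finding.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical plan labels that policy forbids on company code.
FORBIDDEN_PLANS = {"personal", "free"}


@dataclass(frozen=True)
class ToolRecord:
    name: str
    plan: str                                # e.g. "enterprise", "team", "free"
    trains_by_default: Optional[bool]        # None means stance not documented
    opt_out_evidence: Optional[str] = None   # path to proof in the vault


def training_data_findings(tool: ToolRecord) -> list[str]:
    """Return policy findings for one tool (empty list means compliant)."""
    findings = []
    if tool.plan in FORBIDDEN_PLANS:
        findings.append(f"{tool.name}: {tool.plan} plan is forbidden on company code")
    if tool.trains_by_default is None:
        findings.append(f"{tool.name}: no documented training-data stance")
    elif tool.trains_by_default and not tool.opt_out_evidence:
        findings.append(f"{tool.name}: trains on inputs with no opt-out evidence on file")
    return findings
```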

Incident handling

- You have a runbook for "AI tool produced code that caused an incident" with rollback steps and audit-log capture.

- You have a runbook for "AI tool was used with regulated data" — containment, vendor notification, regulator notification if breach thresholds were crossed.

- Your incident response plan explicitly covers AI-assisted code as a cause category.

- Your post-incident review template asks "was AI involved?" as a checkbox, so the trend is visible across incidents.
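Once the "was AI involved?" checkbox is a structured field, the trend is a simple aggregation. A minimal sketch, assuming post-incident reviews are exported as records with a quarter label and the checkbox value (the record type and field names are hypothetical):

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class IncidentReview:
    incident_id: str
    quarter: str        # e.g. "2024-Q2" (hypothetical labelling scheme)
    ai_involved: bool   # the "was AI involved?" checkbox


def ai_share_by_quarter(reviews: list[IncidentReview]) -> dict[str, float]:
    """Fraction of incidents per quarter where the AI checkbox was ticked."""
    totals = Counter(r.quarter for r in reviews)
    with_ai = Counter(r.quarter for r in reviews if r.ai_involved)
    # Counter returns 0 for quarters with no AI-involved incidents.
    return {q: with_ai[q] / totals[q] for q in sorted(totals)}
```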

What "approved tools" should look like

For a regulated team, the list of approved AI tools is short and specific. A reasonable starter:

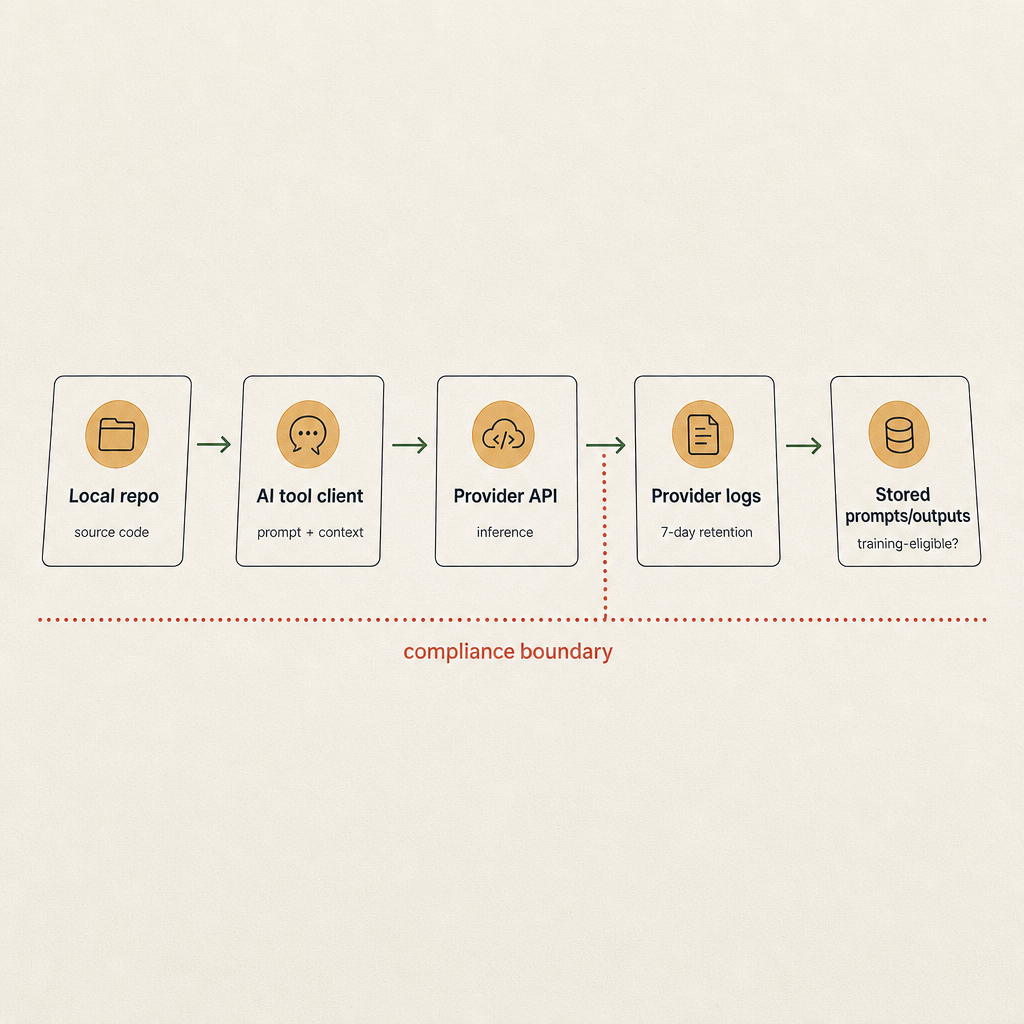

If you can't draw this diagram in 60 seconds, your auditor is going to draw it for you, and you won't like the result.

- Approved for any code: ChatGPT Enterprise, Claude for Work, Anthropic API (with no-training opt-in), Cursor with an enterprise plan, GitHub Copilot Enterprise.

- Approved for non-regulated code only: Personal-tier Claude/ChatGPT, Codex, free-tier Cursor, free-tier Copilot.

- Not approved: Anything that posts code to a model that trains on user input by default and where you don't have a written opt-out.

The boundary between "regulated" and "non-regulated" code lives in your repo metadata. Tag repos at the org level with a compliance:hipaa, compliance:pci, or compliance:none label and make each AI tool's repo allow-list match those labels.
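A minimal sketch of deriving each tool's repo allow-list from those labels. The tool identifiers and tier names are hypothetical stand-ins for your approved-tools list. The design choice worth copying is failing closed: a repo without an explicit compliance:none label is treated as regulated until someone tags it.

```python
# Hypothetical tool identifiers and tiers mirroring the approved-tools list.
TOOL_TIERS = {
    "copilot-enterprise": "any",
    "claude-for-work": "any",
    "copilot-free": "non-regulated",
    "cursor-free": "non-regulated",
}


def is_regulated(labels) -> bool:
    """Fail closed: only a lone, explicit compliance:none counts as unregulated."""
    compliance = {label for label in labels if label.startswith("compliance:")}
    return compliance != {"compliance:none"}


def tool_allowed(tool: str, labels) -> bool:
    tier = TOOL_TIERS.get(tool)
    if tier is None:  # not on the approved list at all
        return False
    return tier == "any" or not is_regulated(labels)


def allow_list(tool: str, repos: dict[str, list[str]]) -> list[str]:
    """Repo names this tool may be enabled on, given org-level labels."""
    return sorted(name for name, labels in repos.items() if tool_allowed(tool, labels))
```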

The 30-minute compliance review prep

Before kicking off a regulated project that uses AI tooling, run through this:

- Inventory the AI tools the team actually uses. Not just the ones you've sanctioned — the ones developers actually use. Survey, don't assume.

- Verify each tool against the data-flow and training-data sections above. Drop unapproved tools, document approved ones.

- Pin the approved-tools list in CLAUDE.md at the repo root. Make it easy for AI assistants themselves to read the policy.

- Set up the CI check that requires CLAUDE.md to be present and contain the policy section (see "Team drift in CLAUDE.md prompts" for the pattern).

- Add the AI-involvement question to your code review template. One checkbox: "Was this PR AI-assisted? If yes, which tool?"
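The CLAUDE.md CI check can be small. A minimal sketch, assuming the policy lives under a "## AI tool policy" heading (the heading text and function names are assumptions, not a standard): it reports a problem when the file is missing, the heading is absent, or the section under it is empty.

```python
import sys
from pathlib import Path

# Assumed heading for the policy section; match whatever your template uses.
POLICY_HEADING = "## AI tool policy"


def policy_section(text: str):
    """Return the body under POLICY_HEADING, or None if the heading is absent."""
    lines = text.splitlines()
    for i, line in enumerate(lines):
        if line.strip() == POLICY_HEADING:
            body = []
            for following in lines[i + 1:]:
                if following.startswith("## "):  # next same-level section
                    break
                body.append(following)
            return "\n".join(body).strip()
    return None


def check_policy_file(repo_root: str) -> list[str]:
    path = Path(repo_root) / "CLAUDE.md"
    if not path.is_file():
        return ["CLAUDE.md is missing at the repo root"]
    body = policy_section(path.read_text(encoding="utf-8"))
    if body is None:
        return [f"CLAUDE.md has no '{POLICY_HEADING}' section"]
    if not body:
        return [f"CLAUDE.md has an empty '{POLICY_HEADING}' section"]
    return []


def main(argv: list[str]) -> int:
    """CI entry point: returns a non-zero exit code on any problem."""
    problems = check_policy_file(argv[0] if argv else ".")
    for problem in problems:
        print(problem, file=sys.stderr)
    return 1 if problems else 0
```

In a CI step, call it as sys.exit(main(sys.argv[1:])) so any problem fails the build.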

That's 30 minutes that turns "we use AI somewhere" into a defensible, auditable position.

The honest answer

Compliance teams don't need AI to be perfect. They need AI use to be known and bounded.

The bar isn't "no AI involvement" — it's "we know which tools are used, where, by whom, with what data, with what review process, and we can produce evidence."

If your checklist can answer the five audit questions above, AI tooling isn't a compliance blocker. It's one more category of supplier risk, and you've managed supplier risk before.

The teams that get into trouble are the ones who never asked. Spend a week putting this in place and you'll be ahead of most regulated teams shipping AI-assisted code today.